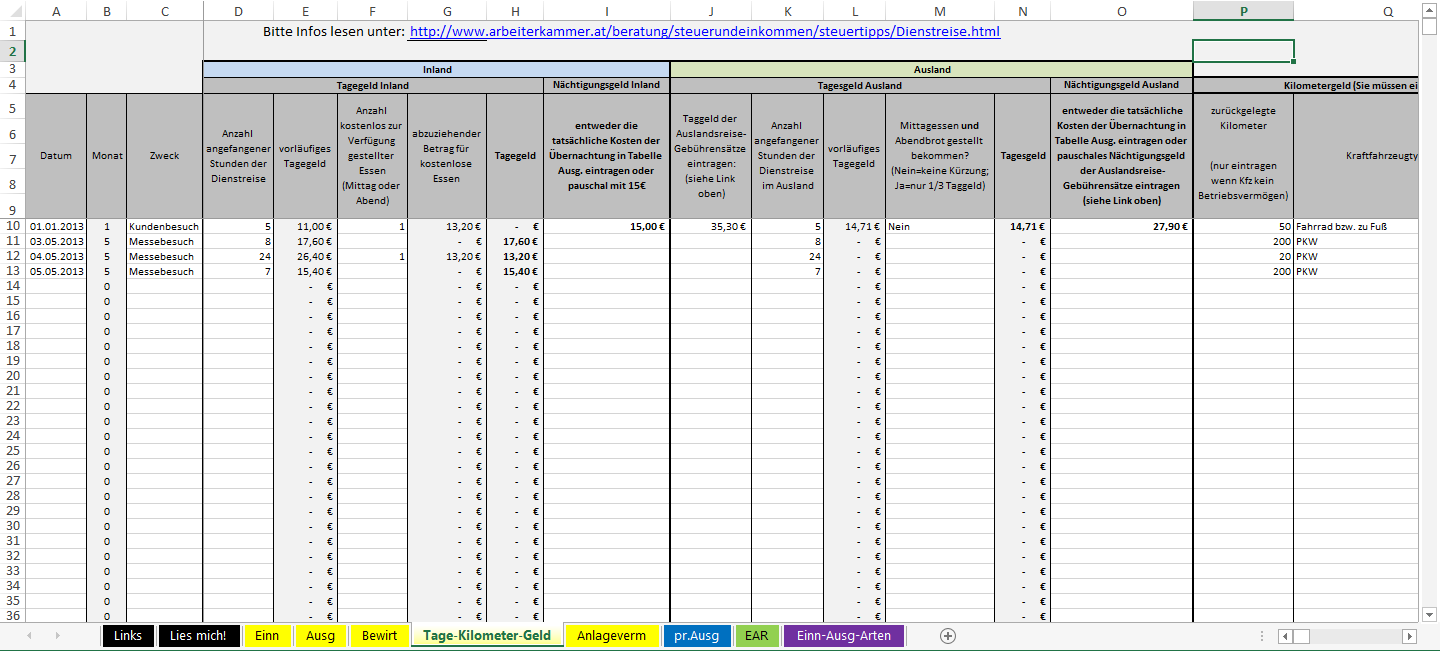

Import.io is a browser-based web scraping tool. By following their easy step-by-step plan, you select the data you want to scrape and the tool does the rest. It is a more sophisticated tool than Kimono. I like it because it shows a clear overview of all your active scrapers and because you can scrape multiple URLs at once.

Kimono has two easy ways to scrape specific URLs: paste the URL into their website or use their bookmarklet. Once you have pointed out the data you need, you can set how often and when you want the data to be collected. I like that the learning curve is not steep and that you don't need a PhD in engineering to use the software. The disadvantage of this tool is that you can’t upload multiple URLs at once.

If you are not used to writing XPath references, use the Scraper for Chrome plugin: select the data point and it shows you the XPath reference directly. Since the scraper script is PHP, you can use a cron job to scrape the desired data hourly, daily, or weekly. Click here to download the example script.
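
To illustrate what an XPath reference does once you have one, here is a minimal sketch in Python rather than PHP. The sample markup, the class names, and the `extract_prices` helper are all made up for the example, and Python's built-in `xml.etree.ElementTree` only understands a subset of XPath and needs well-formed markup, so real pages will usually need a more forgiving HTML parser.

```python
import xml.etree.ElementTree as ET

# Hypothetical, well-formed page fragment used only for illustration.
SAMPLE_PAGE = """
<html>
  <body>
    <div class="product">
      <span class="name">Blue widget</span>
      <span class="price">9.99</span>
    </div>
    <div class="product">
      <span class="name">Red widget</span>
      <span class="price">12.50</span>
    </div>
  </body>
</html>
"""


def extract_prices(page_source):
    """Return the text of every price span found via an XPath-style path."""
    root = ET.fromstring(page_source)
    # ElementTree supports only a subset of XPath; this path selects every
    # <span class="price"> located anywhere below the root element.
    nodes = root.findall(".//span[@class='price']")
    return [node.text for node in nodes]


prices = extract_prices(SAMPLE_PAGE)
print(prices)
```

The path expression is the part you would normally copy from a tool such as Scraper for Chrome and then adjust by hand.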

I’ve been creating a lot of (data-driven) creative content lately, and one of the things I like to do is gather as much data as I can from public sources. In some cases, creating and running database queries even costs too much time and my personally built PHP scraper is faster, so I wanted to share some tools that could be helpful. Just a short disclaimer: use these tools at your own risk! Scraping websites can generate a high number of pageviews and, with that, use bandwidth from the website you are scraping.

Scraper is a simple data mining extension for Google Chrome™ that is useful for online research when you need to quickly analyze data in spreadsheet form. You can select a specific data point (a price, a rating, etc.) and then use your browser menu: click Scrape Similar and you will get multiple options to export or copy your data to Excel or Google Docs. This plugin is really basic but does the job it is built for: fast and easy screen scraping.

I’m not going to explain how this function works, but with the script below you can easily scrape a list of URLs.

This smart web scraping solution can connect to SQL and MySQL Server databases to store data there directly for further processing and analysis. The output file can be parsed according to your specifications and formatted according to user preset selections.
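
To make the bandwidth disclaimer and the idea of storing results in a database a bit more concrete, here is a rough Python sketch. It is not the PHP script this post refers to. The function names, the `pages` table, and the `fake_fetch` stand-in are assumptions made for the example, and SQLite is used only because it ships with Python.

```python
import sqlite3
import time


def scrape_urls(urls, fetch, delay_seconds=1.0):
    """Fetch each URL in turn, pausing between requests to limit load."""
    rows = []
    for index, url in enumerate(urls):
        if index > 0:
            time.sleep(delay_seconds)  # be gentle with the target server
        rows.append((url, fetch(url)))
    return rows


def save_rows(connection, rows):
    """Store (url, content) pairs in a table, replacing earlier copies."""
    connection.execute(
        "CREATE TABLE IF NOT EXISTS pages (url TEXT PRIMARY KEY, content TEXT)"
    )
    connection.executemany(
        "INSERT OR REPLACE INTO pages (url, content) VALUES (?, ?)", rows
    )
    connection.commit()


def fake_fetch(url):
    # Hypothetical stand-in for a real HTTP request, so the sketch runs offline.
    return "content of " + url


connection = sqlite3.connect(":memory:")
rows = scrape_urls(
    ["https://example.com/a", "https://example.com/b"],
    fake_fetch,
    delay_seconds=0.1,
)
save_rows(connection, rows)
print(connection.execute("SELECT COUNT(*) FROM pages").fetchone()[0])
```

In real use, `fetch` would wrap an actual HTTP request, and the delay would be chosen with the target site's capacity in mind.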

Equipped with a powerful visual design tool, FMiner captures every step and creates a model of the interaction with the target site and of the overall data extraction process. FMiner uses a WebKit browser as its core engine, which allows it to extract information from online resources of various kinds, including dynamic sites that use AJAX or JavaScript. Besides, it can operate as a web macro tool that records and simulates human actions in the browser, goes through the website, and gathers complete content structures, whether they are search results or product catalogs.

To run a project, you first create it and begin to “record” it in the integrated browser, then go through all the steps in the browser so that they can be recorded. As soon as you get to the page you need to scrape, create a “scrape page” action and indicate a table for the data. Then add “capture content” actions and assign columns to them. Each data element is defined using an FMiner relative XPath expression, which the user can edit if needed. Besides, you can work with groups of similar page elements.

A flow chart is created for the project to show how the process will go. The project will then be run and the results exported in the format you’ve selected. FMiner can also generate URLs, creating them from the scraped data.
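
FMiner does its URL generation through its visual interface, and nothing here reflects how it works internally. The general idea of building new URLs from scraped values can still be sketched in a few lines of Python. The function names, the example domain, and the `q` query parameter are assumptions chosen for illustration.

```python
from urllib.parse import urlencode, urljoin


def build_urls(base_url, scraped_links):
    """Turn scraped (possibly relative) links into absolute URLs."""
    return [urljoin(base_url, link) for link in scraped_links]


def build_search_urls(search_url, scraped_terms):
    """Create one search URL per scraped term, with the term URL-encoded."""
    return [search_url + "?" + urlencode({"q": term}) for term in scraped_terms]


# Hypothetical values standing in for data captured in an earlier step.
links = build_urls(
    "https://example.com/catalog/",
    ["item-1.html", "/sale/item-2.html"],
)
searches = build_search_urls("https://example.com/search", ["blue widget"])

print(links)
print(searches)
```

The resulting list can then be fed back into the scraper as the next set of pages to visit.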
